Why Some Brands Scale Ad Performance While Others Hit a Wall: The Campaigns vs Systems Divide

It is not budget. It is not creative. It is not the channel. Here is what it actually is.

Two brands. Same platforms. Similar budgets. One is scaling efficiently, watching CAC decline as spend increases. The other is stuck, refreshing creative every six weeks and cycling through agency explanations for why performance has plateaued.

The gap between them is not creative quality, channel selection, or strategic sophistication. It is a structural difference in how the advertising operation itself is built. One brand runs campaigns. The other builds a system. And once that gap opens, it compounds with every passing quarter.

This is the least discussed driver of long-term paid media performance, and it is the reason budget increases stop producing proportional returns at a specific point in every plateauing brand’s growth curve.

The Pattern That Repeats Across Every Plateauing Brand

Across the more than 40 million ad creative tests we run each month for multi-location brands, national advertisers, and government organizations, one pattern repeats consistently. The brands that come to us after frustrating performance are not small or under-resourced. They have reasonable budgets, competent teams, and access to the same AI advertising tools everyone else uses. Some have already worked with well-regarded agencies.

The instinct, almost universally, is to blame the creative. Or the channel mix. Or the agency. Sometimes all three. So they refresh the creative, add a channel, switch agencies. Six months later they are in the same place, having spent more money on the same problem.

The brands that scale ad performance consistently are not doing any of these things differently. They are doing something structurally different that most brands never address.

The Two Types of Advertising Operations

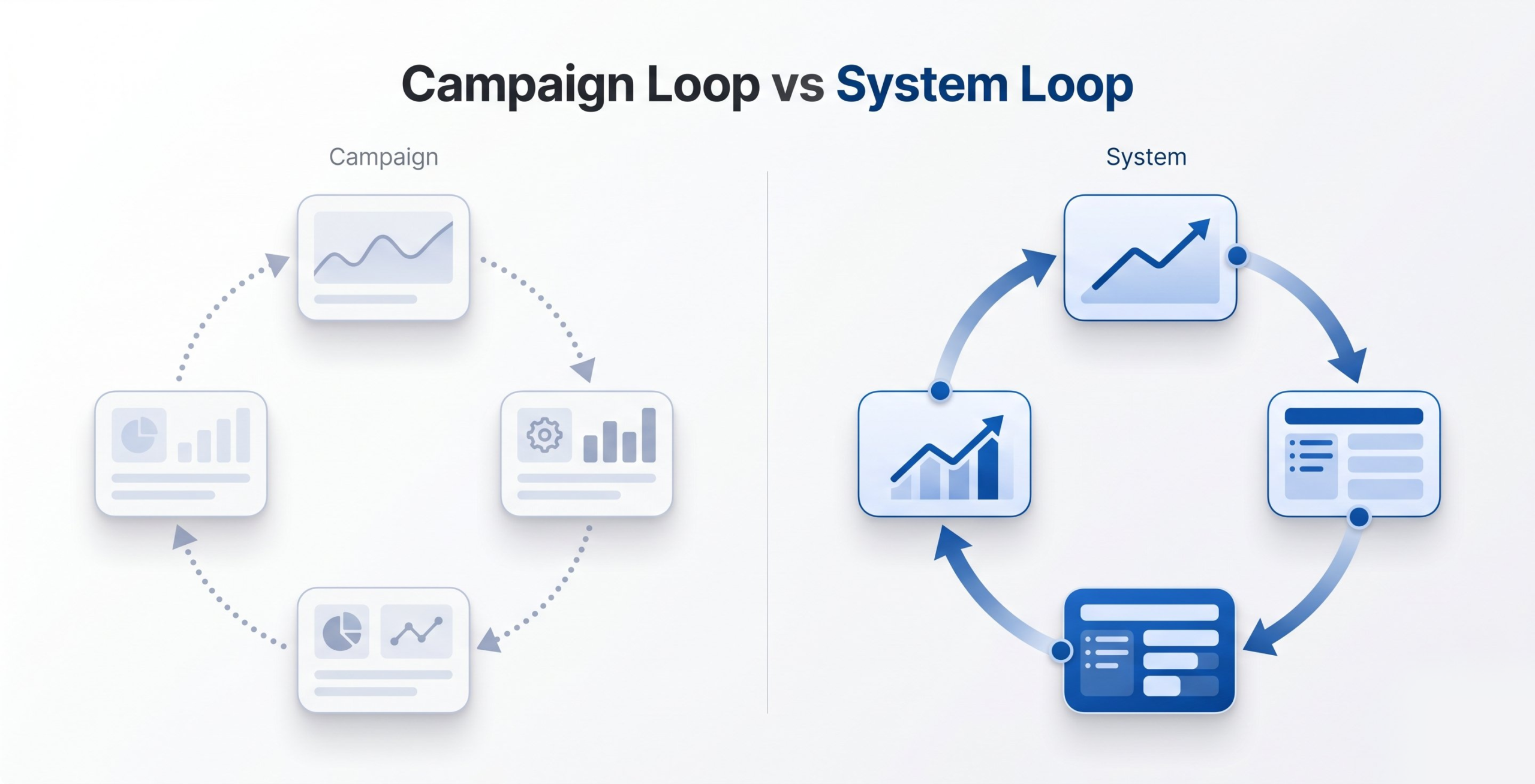

Brands either run campaigns or they build systems. The ones that build systems are the ones that compound.

A campaign operation looks like this. A brief is written. Creative is produced. Ads go live. Results come in. The winning ad gets scaled. The cycle repeats next quarter. Each campaign is largely independent of the ones before it. The knowledge generated in one campaign does not automatically improve the next one. The team gets more experienced over time, but the advertising infrastructure itself does not get smarter.

A system operation looks different. Every campaign generates structured data about which audience characteristics, creative variables, and behavioral contexts produce which outcomes. That data is stored, analyzed, and fed back into the next campaign. Over time, the system develops a model of how the brand’s audience makes decisions. Each campaign is better than the last not because the team worked harder, but because the infrastructure learned more.

The performance gap between these two approaches is not visible in the first month or the first quarter. Research from Forrester found that brands investing in systematic first-party data infrastructure see compounding performance improvements of 15 to 20 percent year over year in paid media efficiency, compared to flat or declining efficiency among brands optimizing at the campaign level only. The gap becomes undeniable at scale, when one brand’s CAC is declining as spend increases and another brand’s CAC is rising with spend and nobody can explain why.
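
To see why a 15 to 20 percent annual improvement is hard to notice early and hard to ignore later, the compounding can be worked through directly. The sketch below is illustrative arithmetic only: it assumes the gain compounds once per year from a common starting point, and the function names are made up for this example.

```python
def efficiency_multiplier(annual_gain: float, years: int) -> float:
    """Cumulative paid media efficiency relative to year 0,
    assuming the same gain compounds once per year."""
    return (1.0 + annual_gain) ** years


def gap_table(years: int = 3):
    """Rows of (year, flat brand, 15% compounding, 20% compounding)."""
    rows = []
    for year in range(1, years + 1):
        rows.append((
            year,
            efficiency_multiplier(0.00, year),  # campaign-level only: flat
            efficiency_multiplier(0.15, year),  # low end of the cited range
            efficiency_multiplier(0.20, year),  # high end of the cited range
        ))
    return rows


if __name__ == "__main__":
    for year, flat, low, high in gap_table(3):
        print(f"year {year}: flat {flat:.2f}x, 15% {low:.2f}x, 20% {high:.2f}x")
```

Under these assumptions, a brand at the low end of the range is roughly 1.5 times as efficient as a flat brand after three years, and a brand at the high end is roughly 1.7 times as efficient.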

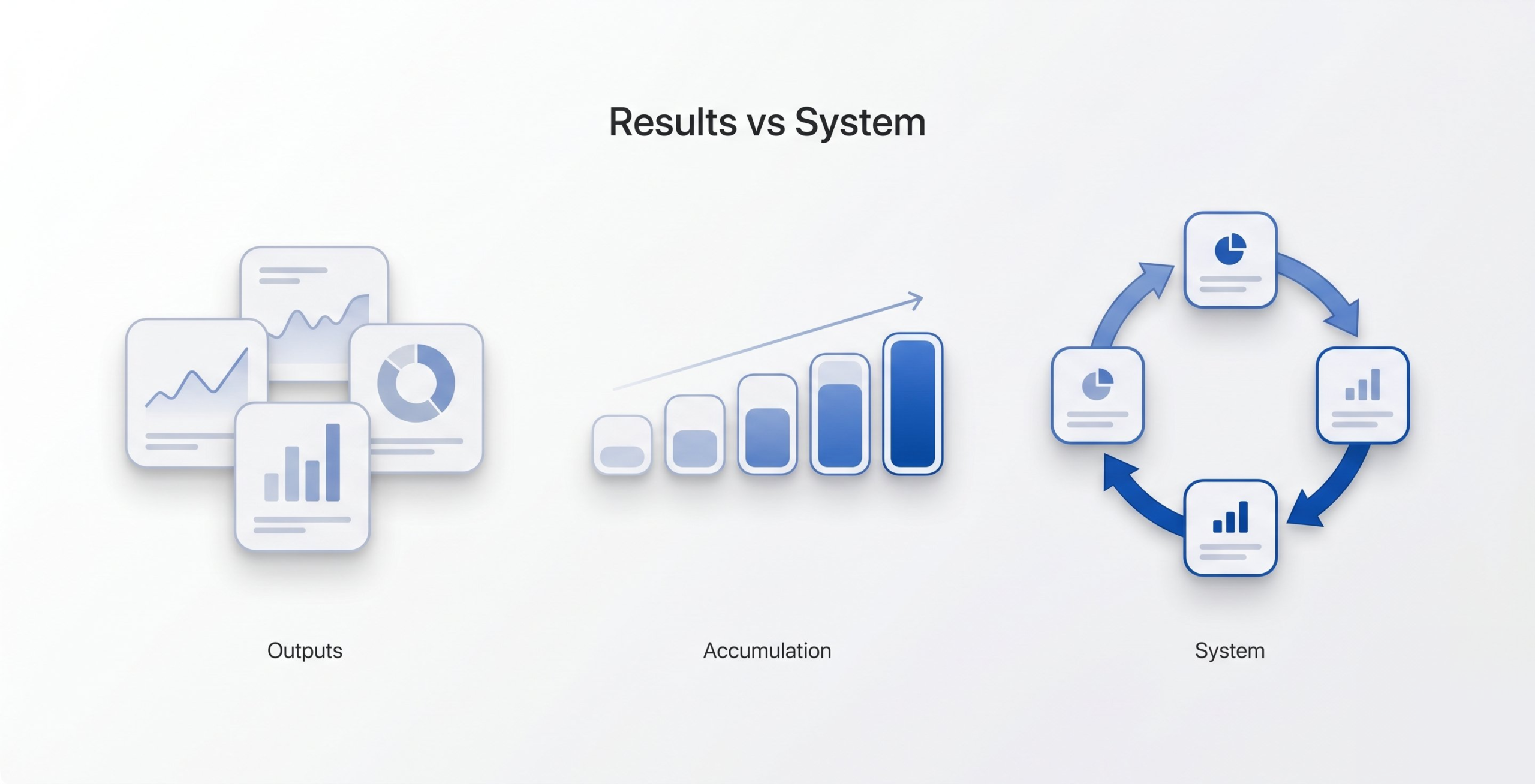

What Campaigns Produce vs What Systems Produce

A campaign produces a result. A specific ad, served to a specific audience, during a specific time window, generates a specific number of conversions at a specific cost. That result is useful for the decision immediately in front of you: did this campaign work? Should we run it again?

What a campaign does not produce is transferable knowledge. Knowing that Ad A beat Ad B in Q3 does not tell you why Ad A won, which audience segments drove the difference, or what that implies for the brief you are writing in Q1 of next year. The result exists. The insight does not.

A system produces a behavioral model. Run enough tests, across enough audience segments, with enough creative variable isolation, and you stop accumulating a library of past winners and start accumulating a map of how your audience responds to different stimuli. That map is the asset. It is more valuable than any individual creative. It does not expire at the end of a campaign cycle. And it gets more accurate with every test you run against it.

McKinsey research found that companies using behavioral data to inform creative and targeting decisions see 5 to 8 times higher marketing ROI than those relying on demographic targeting alone. That gap is not primarily a function of data quality. It is a function of whether the brand has an infrastructure designed to accumulate and apply behavioral learning, or whether it is starting from zero with every campaign regardless of how long it has been advertising.

This is the compounding data advantage. Brands that have it get better results per dollar spent as they grow. Brands that do not have it hit a wall at scale, because each new campaign inherits nothing from the ones before it.

Why Most Brands Never Build the System

The campaign model is not wrong. For most brands at early stages, running campaigns and learning from results is the appropriate approach. The problem is that most brands never make the structural shift from campaign thinking to system thinking, even as their scale demands it.

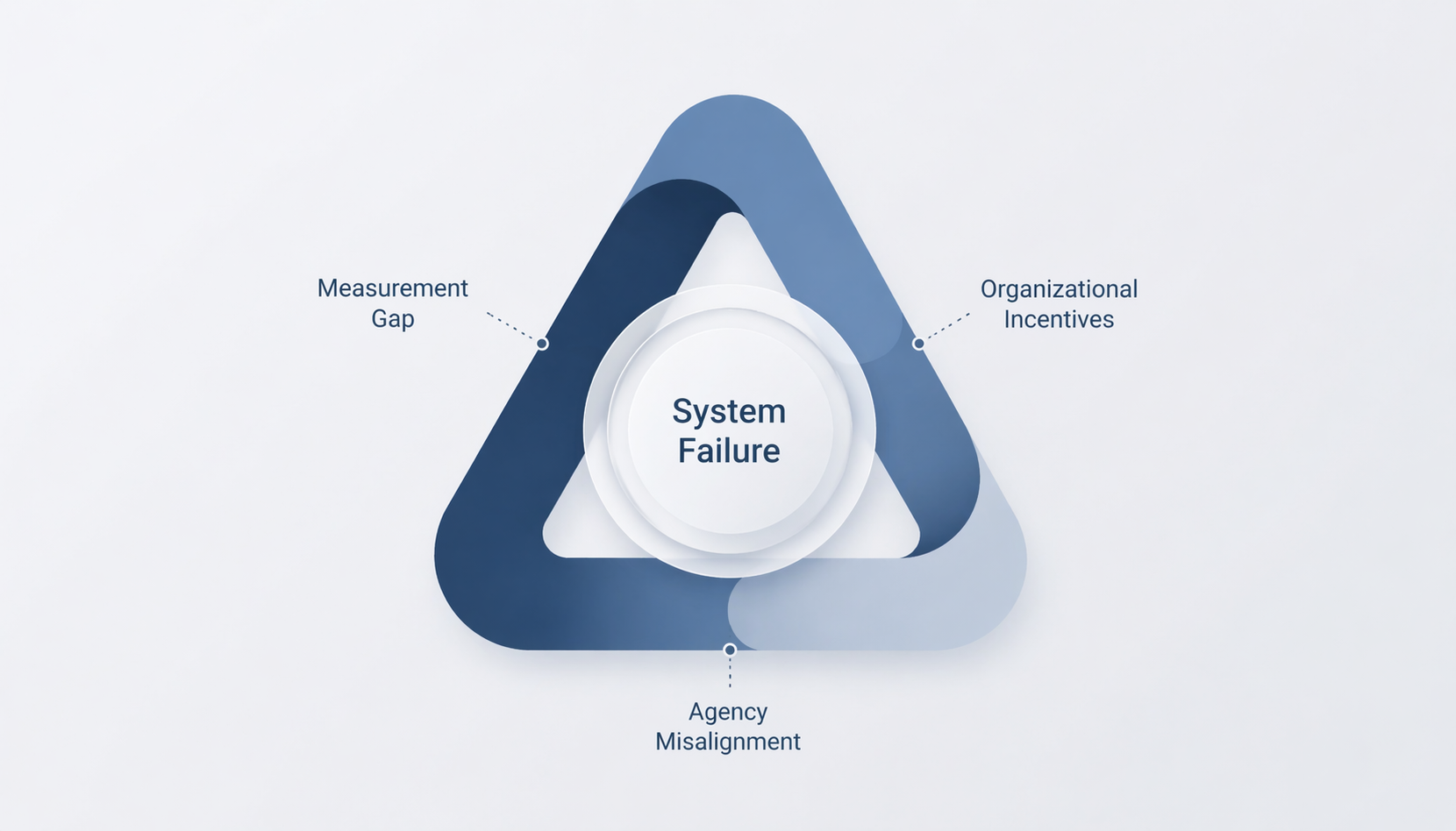

Three reasons this shift does not happen.

Measurement Gap. Campaign operations are measured on campaign metrics: ROAS, CPC, conversion rate, cost per lead. These metrics reset each campaign cycle. There is no metric on a standard dashboard that measures whether your advertising infrastructure is getting smarter over time. According to a 2024 Gartner survey, only 23 percent of marketing leaders reported having a measurement framework that tracked compounding performance improvement across campaign cycles. What does not get measured does not get managed, and the compounding data asset is almost never measured.

Organizational Incentives. Campaign thinking is comfortable because it is contained. A campaign has a start date, an end date, a budget, and a result. System thinking requires ongoing investment in data infrastructure, audience architecture, and creative testing methodology that does not produce a clean quarterly result. Most marketing organizations are not structured or incentivized to make that investment, particularly when quarterly reporting creates pressure for visible short-term performance at the expense of compounding infrastructure.

Agency Misalignment. Most advertising agencies are paid on campaign delivery. Their incentive is to produce good-looking campaign results, not to build infrastructure that would make them easier to replace. An agency that builds a compounding data system for a client is working itself out of a job unless it keeps evolving with that client. Most agencies do not operate this way, and most clients do not ask them to.

What the Architecture Shift Actually Looks Like

Moving from campaign thinking to system thinking does not require a complete rebuild of how you advertise. In practice, it requires three specific changes to how campaigns are structured and what they are expected to produce.

Cohort-level audience architecture. Instead of targeting broad demographic parameters, campaigns are structured around behaviorally defined audience cohorts: groups of people who share behavioral characteristics that predict purchase intent, not just demographic characteristics that correlate with it. The difference matters because behavioral cohorts produce transferable insight. Demographic targeting produces results that cannot be attributed to any specific audience characteristic. At Mixo Ads, we build campaigns around up to 20,000 distinct audience cohorts. The reason is not complexity for its own sake. It is that cohort-level data is the only data that compounds into a useful behavioral model.
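
As a rough sketch of what a behaviorally defined cohort can look like in data terms, the example below groups impressions by behavioral signals and reports conversion rate per cohort. The signals (`visits_last_30d`, `viewed_pricing`, `cart_abandoned`) and the bucket thresholds are hypothetical, chosen only for illustration. This is not a description of how any particular platform defines cohorts.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Impression:
    # Hypothetical behavioral signals; a real schema would differ.
    visits_last_30d: int
    viewed_pricing: bool
    cart_abandoned: bool
    converted: bool


def cohort_key(imp: Impression) -> tuple:
    """Map an impression to a cohort defined by behavior, not demographics."""
    if imp.visits_last_30d >= 5:
        frequency = "frequent"
    elif imp.visits_last_30d >= 2:
        frequency = "returning"
    else:
        frequency = "new"
    return (frequency, imp.viewed_pricing, imp.cart_abandoned)


def conversion_rate_by_cohort(impressions) -> dict:
    """Return {cohort_key: conversion rate} for the given impressions."""
    counts = defaultdict(lambda: [0, 0])  # cohort -> [impressions, conversions]
    for imp in impressions:
        bucket = counts[cohort_key(imp)]
        bucket[0] += 1
        bucket[1] += int(imp.converted)
    return {key: conv / total for key, (total, conv) in counts.items()}
```

Because each cohort key is built from named behaviors, a difference in conversion rate between two cohorts points to a specific characteristic that can be carried into the next brief.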

Variable isolation in creative testing. Instead of testing complete ads against each other, you test one variable at a time against a controlled version of everything else. Headline versus headline. Image versus image. Offer structure versus offer structure. This is slower than testing full ads but produces knowledge that compounds. After 50 headline tests across five audience cohorts, you have a model for headline construction that improves every future brief. After 50 full-ad tests, you have 50 winners and no model. Research on advertising creative testing consistently shows that variable isolation produces 3 to 4 times more transferable learning per test than full-ad comparison testing, precisely because it separates signal from noise at the element level.
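
A minimal sketch of variable isolation, assuming each ad is described as a dictionary of creative variables: the first function checks that a variant differs from the control in exactly one variable, and the second compares conversion rates with a standard pooled two-proportion z-score. The variable names and the choice of a z-test are assumptions for illustration. A production testing setup may use a different statistical method.

```python
import math


def isolated_variable(control: dict, variant: dict) -> str:
    """Return the single creative variable that differs between two ads.

    Raises ValueError if the ads do not share the same variables or if
    zero or several variables differ, since the test would not be isolated.
    """
    if control.keys() != variant.keys():
        raise ValueError("control and variant must define the same variables")
    changed = [name for name in control if control[name] != variant[name]]
    if len(changed) != 1:
        raise ValueError(f"expected exactly one changed variable, got {changed}")
    return changed[0]


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Pooled two-proportion z-score for variant B against control A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (rate_b - rate_a) / std_err


if __name__ == "__main__":
    control = {"headline": "Save time today", "image": "team.png", "offer": "10% off"}
    variant = {"headline": "Stop wasting hours", "image": "team.png", "offer": "10% off"}
    print(isolated_variable(control, variant))     # the variable under test
    print(two_proportion_z(100, 1000, 130, 1000))  # z-score for the variant
```

Rejecting any comparison in which more than one variable changed is what makes the result attributable to a single creative element.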

A performance database maintained across campaign cycles. Every test result, every cohort response pattern, every creative variable outcome is stored in a structured format that can be queried before the next brief is written. This is the infrastructure that makes the system smarter over time. Without it, the knowledge generated in each campaign evaporates when the campaign ends. Most brands have years of campaign history and almost none of it in a format that can inform the next decision.
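
One way such a database could be structured is sketched below using Python's built-in `sqlite3` module. The table name, columns, and the `best_values` query are hypothetical and meant only to show the idea of a store that persists across campaigns and can be queried before a brief is written.

```python
import sqlite3


def open_performance_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a store of test results that outlives any one campaign."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS test_results (
               campaign    TEXT NOT NULL,
               cohort      TEXT NOT NULL,
               variable    TEXT NOT NULL,   -- e.g. headline, image, offer
               value       TEXT NOT NULL,   -- the variant that was shown
               impressions INTEGER NOT NULL,
               conversions INTEGER NOT NULL
           )"""
    )
    return conn


def record_result(conn, campaign, cohort, variable, value, impressions, conversions):
    """Store one isolated test result."""
    conn.execute(
        "INSERT INTO test_results VALUES (?, ?, ?, ?, ?, ?)",
        (campaign, cohort, variable, value, impressions, conversions),
    )
    conn.commit()


def best_values(conn, cohort, variable):
    """Rank values of one creative variable for one cohort across all campaigns."""
    return conn.execute(
        """SELECT value, SUM(conversions) * 1.0 / SUM(impressions) AS rate
           FROM test_results
           WHERE cohort = ? AND variable = ?
           GROUP BY value
           ORDER BY rate DESC""",
        (cohort, variable),
    ).fetchall()
```

The query pools results across campaign cycles, so a headline style tested in two different quarters contributes to a single ranking instead of two disconnected reports.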

The Wall Is Not a Budget Problem

When brands hit the performance wall, the immediate instinct is to ask how much more budget is needed to break through it. This is the wrong question, and asking it can delay the actual fix by months, even at brands that already have more than enough resources to solve the problem correctly.

Budget scales what is already working. If the underlying architecture is a campaign operation with no compounding data layer, more budget produces more campaigns with the same structural limitations. The CAC stays flat or rises. The performance ceiling does not move. According to Nielsen research, brands that double their digital advertising spend without restructuring audience architecture see an average efficiency decline of 25 to 40 percent in cost per acquisition within the first two quarters of scaling. More budget, worse returns.
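
The arithmetic behind "more budget, worse returns" can be sketched as follows. This example reads the 25 to 40 percent efficiency decline as a 25 to 40 percent rise in cost per acquisition, which is one interpretation of the figure. The baseline spend and CPA are invented purely for illustration.

```python
def acquisitions_after_scaling(
    base_spend: float,
    base_cpa: float,
    spend_multiplier: float,
    cpa_increase: float,
) -> float:
    """Acquisitions after scaling spend, assuming CPA rises by `cpa_increase`
    (for example, 0.25 means cost per acquisition rises by 25 percent)."""
    new_spend = base_spend * spend_multiplier
    new_cpa = base_cpa * (1.0 + cpa_increase)
    return new_spend / new_cpa


if __name__ == "__main__":
    # Hypothetical baseline: 100,000 in spend at a CPA of 50.
    baseline = acquisitions_after_scaling(100_000, 50.0, 1.0, 0.0)
    for rise in (0.25, 0.40):
        scaled = acquisitions_after_scaling(100_000, 50.0, 2.0, rise)
        print(f"CPA +{rise:.0%}: {scaled / baseline:.2f}x acquisitions for 2x spend")
```

Under that reading, doubling spend buys about 1.6 times the acquisitions at the low end of the range and about 1.4 times at the high end, not the 2 times a stable CPA would deliver.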

The right question is whether the advertising operation is structured to learn. If it is, scaling budget accelerates the learning and the compounding advantage grows faster. If it is not, scaling budget accelerates the problem.

How System Thinking Extends to AI-Driven Discovery

The principle driving the campaign-versus-system divide in paid media applies more broadly than paid media alone. It applies anywhere AI systems are learning from the inputs you provide.

Answer Engine Optimization is a parallel case. Brands appearing consistently in ChatGPT, Perplexity, and Google’s AI-generated answers are not doing so because their content is longer or more keyword-stuffed than their competitors’. They are doing so because their content is structured as a system of clear, interconnected signals that AI systems can reliably extract and reassemble: consistent entity references, direct answers to specific questions, semantic relationships that hold across pages.

The parallel to paid media is exact. Brands that treat content as a series of campaign-level outputs (publish a post, get some traffic, move on) generate scattered signals that no AI system can assemble into a coherent model of what the brand does or who it serves. Brands that treat content as a system (structured, interlinked, semantically consistent) train AI systems to understand them with the same compounding effect that system-level paid media trains platform AI to find the right audience.

The brands building system infrastructure across both paid media and AI-discoverable content are compounding their advantage across every surface where customers make decisions. The ones treating either as a series of disconnected campaigns are optimizing tactically within ceilings they do not realize are there.

The Long Game

The performance gap between scaling brands and plateauing brands rarely looks dramatic in a single quarter. It becomes undeniable over two years.

The brands building compounding advantage now are making a series of unglamorous architectural decisions: cohort-level audience structures, variable-isolated creative tests, persistent performance databases. None of these decisions produce a headline metric improvement this quarter. All of them produce a structural performance advantage that is extremely difficult to close once established.

The compounding data advantage is not built in a single campaign. It is built through the consistent accumulation of structured learning across every campaign over an extended period.

The brands that understand this are not just running better campaigns. They are building an asset that makes every future campaign better than the last. The ones that do not are optimizing tactically within a ceiling they do not realize is there.

A campaign produces a specific result within a defined time window. A system accumulates structured data from every campaign and uses it to improve every future campaign. Campaigns produce outputs. Systems produce compounding behavioral models that make each subsequent campaign better than the last.

Brands plateau because their advertising operation is not structured to learn. Each campaign starts from zero rather than building on accumulated knowledge. Adding budget to a campaign-level operation multiplies the existing inefficiency instead of breaking through it. The plateau is structural, not budgetary.

Every test a system-based advertising operation runs produces transferable insight: which audience cohorts respond to which creative variables under which conditions. This insight is stored and applied to future campaigns. Over twelve to twenty-four months, the brand is optimizing against a significantly more accurate behavioral model than a brand running campaigns in isolation.

Budget scales what is already working. If the advertising operation has no compounding data layer, more budget produces more campaigns with the same structural limitations. Nielsen research shows brands doubling digital ad spend without restructuring audience architecture see efficiency decline of 25 to 40 percent in cost per acquisition within two quarters.

Cohort-level architecture groups audiences by behavioral characteristics that predict purchase intent, rather than demographic characteristics that only correlate with it. Behavioral cohorts produce transferable insight that compounds across campaigns. Demographic targeting produces results that cannot be attributed to any specific audience characteristic, so the learning does not transfer.

Variable isolation means testing one creative element at a time (headline, image, offer structure) against a controlled version of everything else, rather than testing complete ads against each other. Variable isolation produces 3 to 4 times more transferable learning per test than full-ad comparisons, because it separates signal from noise at the element level.