Is Your Advertising Actually Working? Why Incrementality Testing Is the Only Way to Know

Your dashboard says yes. Your attribution model says yes. Neither of them is answering the question you actually asked.

Most advertising dashboards show positive results. ROAS is above target. Conversions are tracking. Cost per acquisition looks efficient. And yet, when the fundamental question is asked directly, most marketing teams cannot answer it with confidence: would those customers have purchased without the advertising?

This is the incrementality question. It is the only question that measures whether advertising is generating new revenue or simply claiming credit for revenue that was already happening.

Incrementality testing is the measurement methodology that answers it. For any brand making significant advertising investment decisions, understanding what incrementality testing is, how it works, and why standard attribution cannot replace it is essential to making those decisions accurately. The brands that understand this distinction make structurally better budget decisions. Those that do not keep funding campaigns based on a reporting narrative that describes what their advertising claimed rather than what it caused.

What Is Incrementality Testing in Advertising?

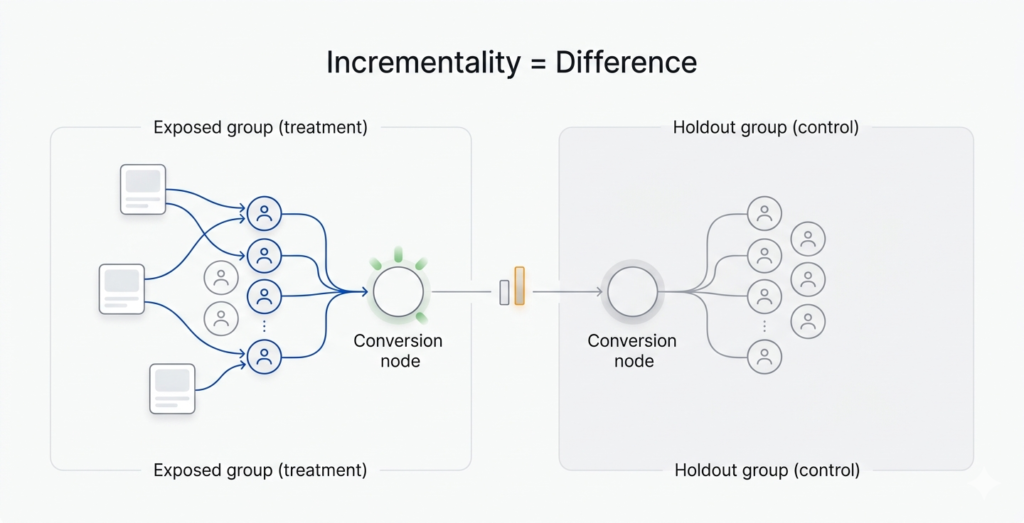

Incrementality testing measures the causal impact of advertising by comparing conversion outcomes between an audience that was exposed to a campaign and an audience that was not.

The exposed group sees the advertising as normal. The holdout group, a defined segment of the target audience, does not see the campaign during the test period. All other conditions remain constant. At the end of the measurement window, the conversion rates of both groups are compared. The difference between the two rates is the incremental lift: the portion of conversion behavior that was caused by the advertising rather than occurring organically.

The output of an incrementality test is not a ROAS number or an attributed conversion count. It is a lift percentage that represents the true causal contribution of the advertising to conversion behavior. This distinction is significant. A campaign can show a 4x attributed ROAS and a 15 percent incremental lift simultaneously. The attributed ROAS describes what the campaign claimed credit for. The incremental lift describes what it actually caused. These are different numbers and they lead to different budget decisions.
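The arithmetic behind a lift figure is simple enough to sketch. The example below assumes lift is reported relative to the holdout group's conversion rate, which is a common convention but not one this article specifies, and every count in it is hypothetical.

```python
def conversion_rate(conversions: int, users: int) -> float:
    """Fraction of users in a group who converted."""
    if users <= 0:
        raise ValueError("users must be positive")
    return conversions / users


def relative_lift(exposed_rate: float, holdout_rate: float) -> float:
    """Lift of the exposed group over the holdout, relative to the holdout rate."""
    if holdout_rate <= 0:
        raise ValueError("holdout_rate must be positive")
    return (exposed_rate - holdout_rate) / holdout_rate


# Hypothetical counts, chosen only for illustration.
exposed_rate = conversion_rate(conversions=2_300, users=100_000)
holdout_rate = conversion_rate(conversions=200, users=10_000)
lift = relative_lift(exposed_rate, holdout_rate)

print(f"exposed {exposed_rate:.2%}, holdout {holdout_rate:.2%}, lift {lift:.1%}")
```

With these made-up counts the exposed group converts at 2.3 percent against 2.0 percent for the holdout, which works out to a lift of roughly 15 percent.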

Why Standard Attribution Cannot Answer the Incrementality Question

What Attribution Models Actually Measure

Attribution models are designed to assign credit to advertising touchpoints within a customer’s conversion journey. Last-click attribution assigns full credit to the final touchpoint before conversion. First-click assigns it to the first. Data-driven models use algorithms to distribute credit across multiple touchpoints based on historical patterns.

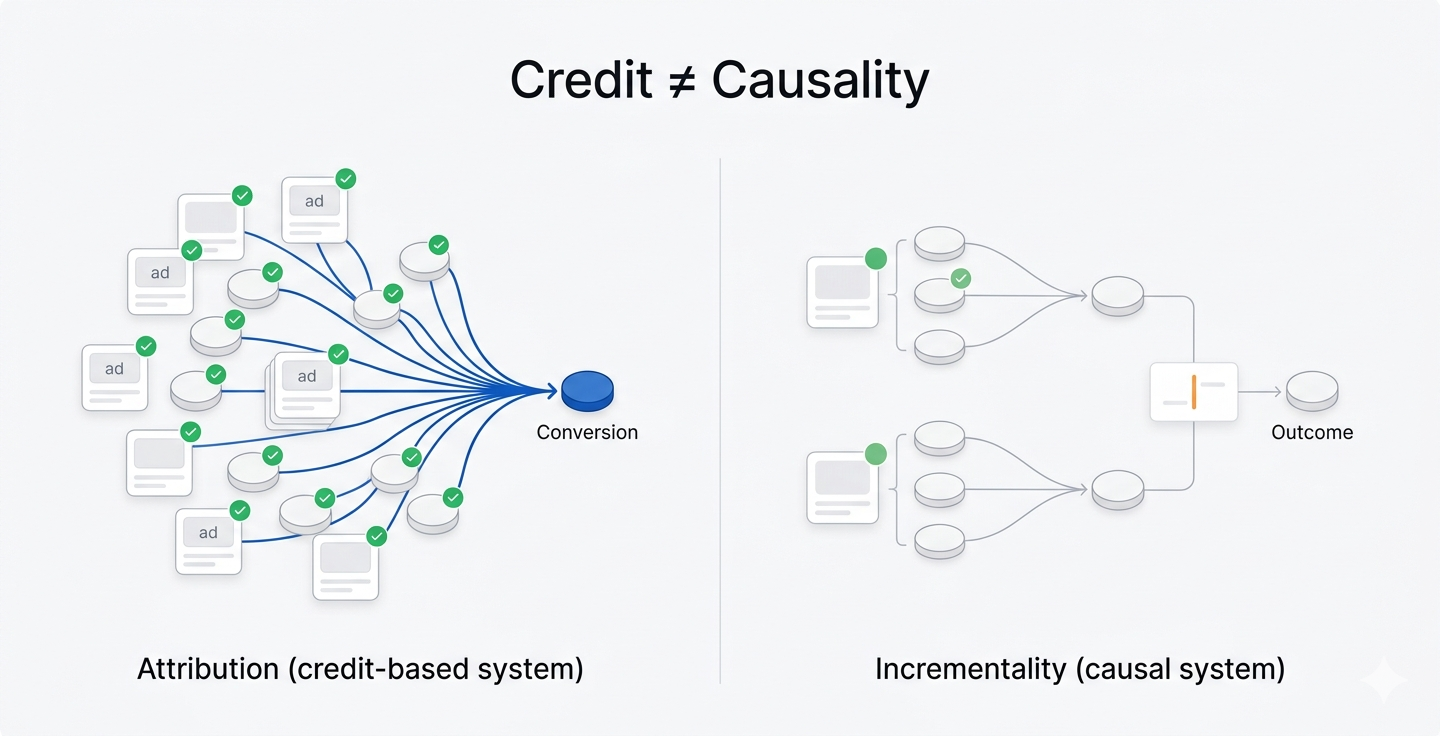

Every attribution model answers the same question: which touchpoints were present when the customer converted? None of them answer the question that determines whether advertising is genuinely effective: which touchpoints caused the conversion?

This is not a flaw in any specific attribution model. It is a structural limitation of the entire credit-assignment approach. Attribution was designed to distribute credit across a journey, not to measure whether the journey would have ended differently without specific touchpoints. The distinction between these two objectives is the entire gap between attributed performance and incremental performance.

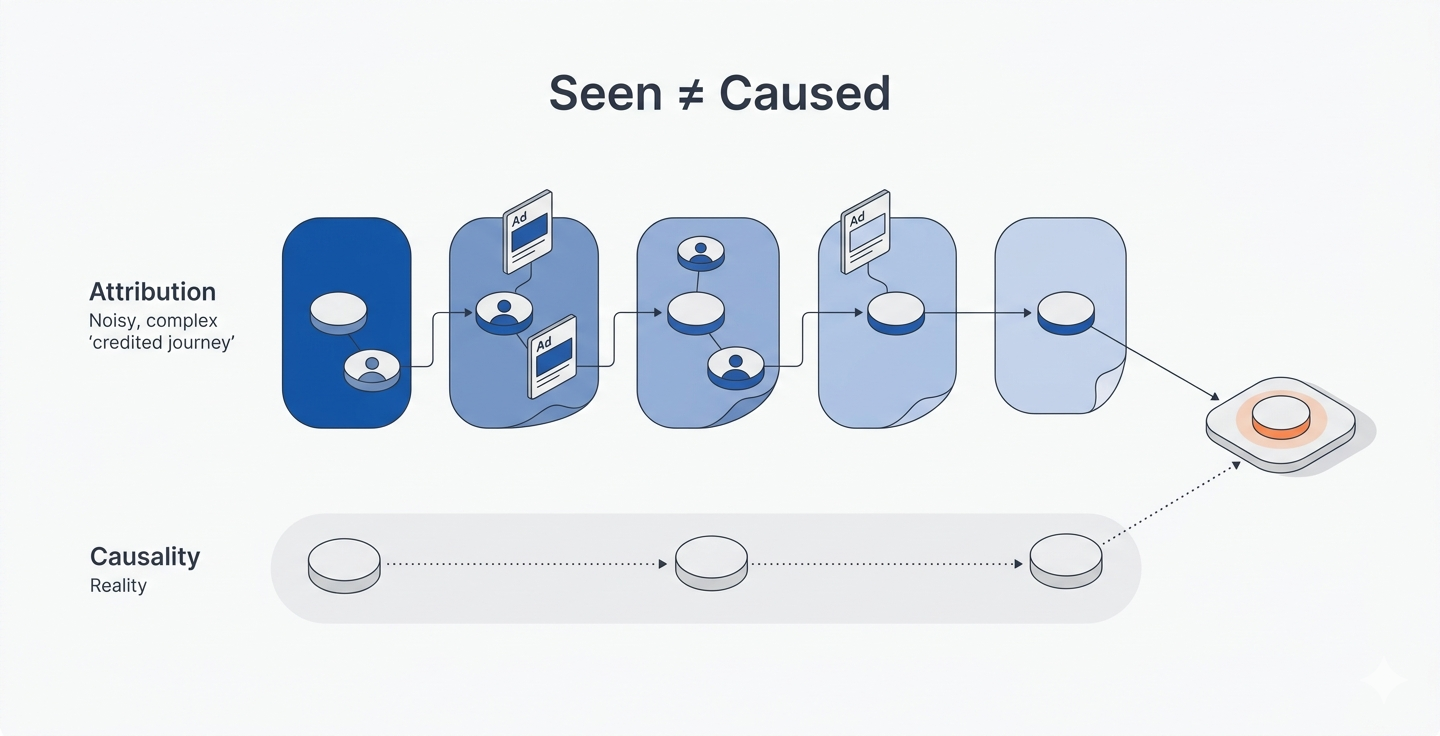

The Presence vs Causation Problem

A customer who has already decided to purchase, who has visited a product page multiple times, added items to a cart, and searched the brand directly, is going to convert. When a retargeting ad appears in that customer’s journey before they complete the purchase, the attribution model records the ad as a conversion driver. The customer was already converting. The ad was present. The attribution model does not distinguish between the two.

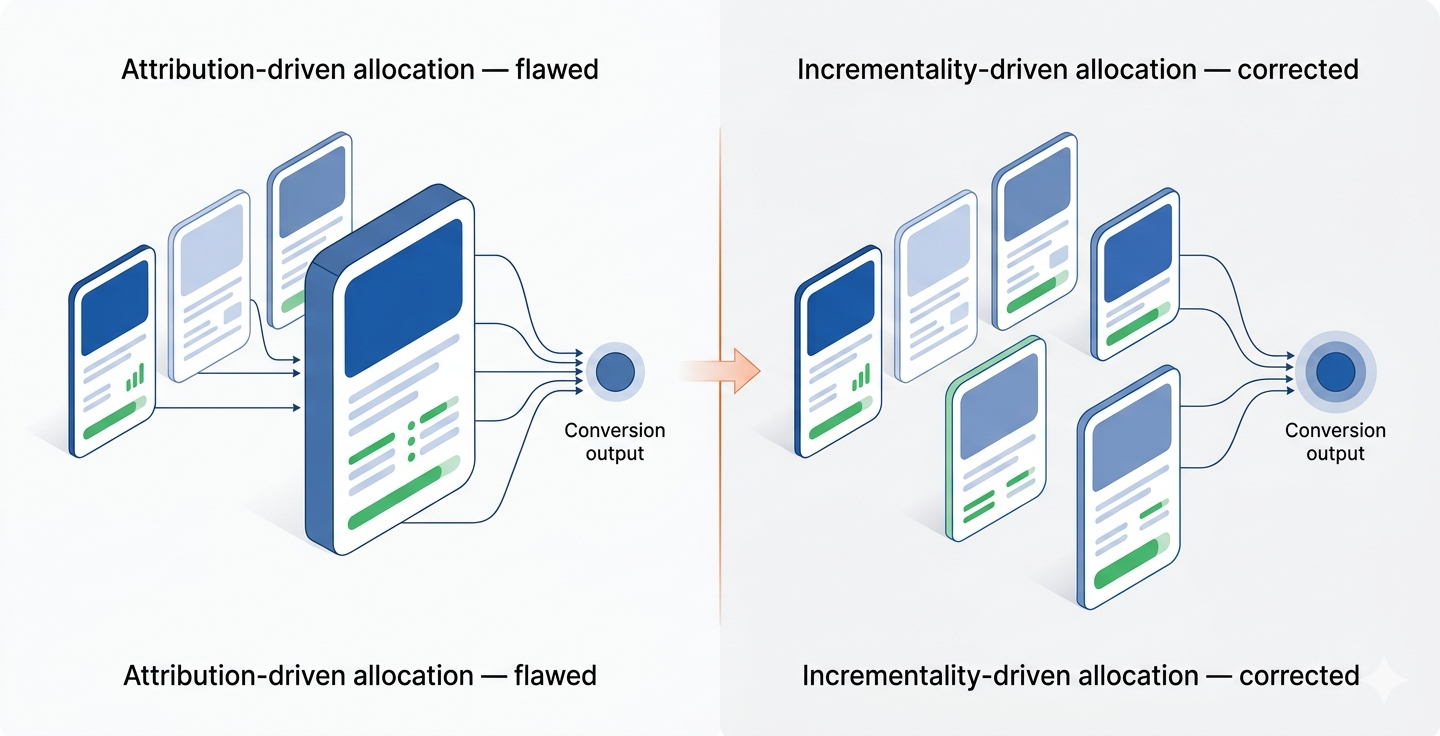

This structural limitation means that every attribution model, regardless of its sophistication, consistently over-credits campaigns that reach high-intent audiences already in the conversion funnel. Retargeting campaigns and branded search campaigns show strong attributed ROAS not because they are generating the most incremental revenue, but because they are efficiently accompanying audiences who were already converting.

The implication for budget allocation is significant. Brands optimizing toward attributed ROAS are systematically directing the largest share of their advertising investment toward the campaigns doing the least genuine persuasion work. The campaigns that are actually changing behavior, reaching audiences who were not already on the way to purchase, show weaker attributed metrics and receive proportionally less investment as a result.

The Multi-Platform Attribution Overlap Problem

When a single customer is reached by campaigns across multiple platforms before converting, each platform claims credit for the conversion independently. A customer exposed to a Meta campaign on Monday, a Google display ad on Wednesday, and a branded search result on Friday generates three separate attributed conversions across three platform dashboards, all from a single purchase event.

When total attributed conversions across all active platforms are compared against actual backend conversions for the same period, the combined platform total almost always exceeds actual conversions by a significant margin. Every budget allocation decision built on platform-reported attribution is built on a figure that has been inflated by simultaneous credit claims across competing platforms.
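The comparison described above can be run as a quick check. This is a minimal sketch: the platform names, the counts, and the `attribution_inflation` helper are all hypothetical, and the backend figure is assumed to be a deduplicated count of real orders for the same period.

```python
def attribution_inflation(platform_conversions: dict[str, int],
                          backend_conversions: int) -> float:
    """Ratio of summed platform-claimed conversions to actual backend conversions."""
    if backend_conversions <= 0:
        raise ValueError("backend_conversions must be positive")
    return sum(platform_conversions.values()) / backend_conversions


# Hypothetical dashboard totals for one reporting period.
claimed = {"meta": 420, "google_display": 310, "branded_search": 520}
actual_orders = 800  # deduplicated purchases recorded in the backend

ratio = attribution_inflation(claimed, actual_orders)
print(f"platforms claim {sum(claimed.values())} conversions "
      f"against {actual_orders} real orders ({ratio:.2f}x)")
```

A ratio well above 1.0 signals that the platforms are jointly claiming more conversions than actually occurred. It does not say which platform is over-claiming, which is the question the holdout test addresses.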

This problem is compounded by the fact that attribution models are built by the platforms being measured. Google’s attribution model is built by Google. Meta’s is built by Meta. Both platforms have a structural incentive to build models that maximize the credit assigned to their own inventory. The advertiser uses these models to make budget allocation decisions. The decisions consistently favor the platforms producing the most favorable attribution. The budgets renew. The cycle continues.

How Incrementality Testing Works

The Holdout Methodology

The standard incrementality test uses a holdout group methodology. A defined segment of the target audience, typically between 10 and 20 percent and ideally assigned at random, is excluded from seeing a specific campaign during the test period. The remaining audience is exposed to the campaign as normal. All other advertising, organic channels, and external conditions remain consistent across both groups. At the end of the measurement window, conversion rates are compared between the exposed group and the holdout group. The difference in conversion rate, usually expressed as a percentage of the holdout group's rate, is the incremental lift attributable to the campaign.

The holdout group functions as the control group in a controlled experiment. It answers the counterfactual question: what would have happened if this campaign had not run? Without a holdout group, this question cannot be answered. With one, it can be answered with statistical reliability, provided the test is structured correctly.
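One way to check whether an observed difference is statistically reliable is a two-proportion z-test. The article does not name a specific test, so treat this as one reasonable choice rather than the prescribed method. The counts are hypothetical and the sketch uses only the Python standard library.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_exposed: int, n_exposed: int,
                          conv_holdout: int, n_holdout: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_exposed = conv_exposed / n_exposed
    p_holdout = conv_holdout / n_holdout
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_exposed + conv_holdout) / (n_exposed + n_holdout)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_exposed + 1 / n_holdout))
    z = (p_exposed - p_holdout) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: 2.3% exposed conversion rate vs 2.0% in the holdout.
z, p_value = two_proportion_z_test(2_300, 100_000, 200, 10_000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

In this made-up case the p-value lands slightly above the conventional 0.05 cutoff, even though the holdout recorded 200 conversions. A lift that looks clear on a dashboard can still be within the range of random variation, which is why test structure matters as much as the holdout itself.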

What the Results Reveal

An incrementality test produces one of three outcomes.

Positive incremental lift occurs when the exposed group converts at a higher rate than the holdout, by a margin larger than random variation would explain. The campaign is generating conversions that would not have occurred organically. The advertising is working in the truest sense of the term.

Minimal incremental lift occurs when the conversion rates between exposed and holdout groups are similar. The campaign is reaching audiences who were already converting. The attributed ROAS is overstating actual impact. Budget reallocation is indicated.

Negative incremental lift occurs in rare cases where the holdout group outperforms the exposed group. If the gap is too large to be random variation, it indicates that the advertising is actively interfering with organic conversion behavior. It typically occurs when high-frequency campaigns create negative brand associations among audiences already predisposed to convert.

Each of these outcomes leads to a different and specific budget decision. Positive lift justifies investment and potentially scaling. Minimal lift indicates the budget should be redirected toward campaigns with genuine causal impact. Negative lift indicates the campaign should be paused and restructured before it erodes organic conversion behavior further.
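The three outcomes and their budget decisions can be written down as a simple decision rule. The thresholds here (`alpha` and `min_lift`) are illustrative assumptions, not values from this article, and treating a statistically inconclusive result as "minimal" is a simplification. An inconclusive test may simply need more data.

```python
def classify_outcome(lift: float, p_value: float,
                     alpha: float = 0.05, min_lift: float = 0.02) -> str:
    """Map a measured lift to one of the three outcomes described above.

    alpha and min_lift are hypothetical thresholds chosen for illustration.
    """
    if p_value >= alpha or abs(lift) < min_lift:
        return "minimal"
    return "positive" if lift > 0 else "negative"


# Budget decisions paraphrased from the paragraph above.
BUDGET_ACTION = {
    "positive": "keep investing and consider scaling",
    "minimal": "redirect budget toward campaigns with causal impact",
    "negative": "pause and restructure the campaign",
}

outcome = classify_outcome(lift=0.18, p_value=0.01)
print(outcome, "->", BUDGET_ACTION[outcome])
```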

Requirements for a Reliable Incrementality Test

For an incrementality test to produce statistically reliable results, four conditions must be met.

The holdout group must be large enough to accumulate a minimum of 100 conversion events during the test period. Below this threshold, random variation in the holdout's conversion count is large relative to the lift being measured, which makes the result unreliable. In practice, this means the holdout group size must be calibrated against the baseline conversion rate of the audience being tested, not simply set at a fixed percentage.

The measurement window must be at least as long as the average purchase cycle for the product or service being advertised. A test window shorter than the purchase cycle will systematically understate the campaign’s incremental impact because it will not capture conversions that began during the test period but completed after it ended.

The holdout group must be cleanly isolated from the campaign being tested. If holdout users are being reached by the same campaign through a different channel or device, the holdout is contaminated and the result will be inaccurate. This is a common failure point in multi-channel environments where the same audience is reachable across multiple platforms simultaneously.

The test should be run across multiple consecutive periods before drawing definitive conclusions. A single test result can be influenced by seasonal variation, competitive activity, or audience composition differences that have nothing to do with the campaign being tested. Consistent results across multiple test periods produce a finding that is reliable enough to inform lasting budget decisions.
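The first condition above, sizing the holdout against the baseline conversion rate, comes down to a short calculation. The sketch below uses the article's 100-conversion minimum. The audience size and baseline rate are hypothetical, and the result is an expected count, so a real test should leave some margin.

```python
import math


def min_holdout_users(baseline_rate: float, min_conversions: int = 100) -> int:
    """Smallest holdout expected to produce min_conversions at the baseline rate."""
    if not 0 < baseline_rate <= 1:
        raise ValueError("baseline_rate must be in (0, 1]")
    return math.ceil(min_conversions / baseline_rate)


def expected_holdout_conversions(audience_size: int, holdout_fraction: float,
                                 baseline_rate: float) -> float:
    """Expected conversions in a holdout of the given fraction of the audience."""
    return audience_size * holdout_fraction * baseline_rate


# Hypothetical audience of 100,000 users converting at 0.5% over the test window.
audience = 100_000
baseline = 0.005

needed = min_holdout_users(baseline)
at_ten_percent = expected_holdout_conversions(audience, 0.10, baseline)

print(f"holdout needed: {needed} users ({needed / audience:.0%} of the audience)")
print(f"a fixed 10% holdout would expect only {at_ten_percent:.0f} conversions")
```

For this hypothetical audience, a default 10 percent holdout would expect about half the conversions the threshold calls for. The options are a larger holdout or a longer test window.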

What Incrementality Testing Reveals About Common Campaign Types

Retargeting Campaigns

Retargeting campaigns consistently show the largest gap between attributed performance and incremental performance. Because retargeting targets audiences already in the purchase funnel, a significant proportion of attributed conversions occur among users who would have converted without the retargeting exposure.

Incrementality tests on retargeting campaigns frequently reveal that only 20 to 40 percent of attributed conversions are genuinely incremental. The remaining 60 to 80 percent represent organic conversions that the retargeting campaign was present for but did not cause. Brands allocating significant budget to retargeting based on attributed ROAS are often over-investing in the campaign category with the lowest actual incremental impact.
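To see how a figure in that range is derived, the holdout conversion rate can be used to estimate how many exposed-group conversions would have happened anyway. This is a sketch with hypothetical counts. It assumes the platform attributes every exposed-group conversion to the campaign, which real attribution windows may not do.

```python
def incremental_conversions(conv_exposed: int, n_exposed: int,
                            conv_holdout: int, n_holdout: int) -> float:
    """Exposed-group conversions beyond what the holdout rate predicts."""
    holdout_rate = conv_holdout / n_holdout
    expected_organic = holdout_rate * n_exposed  # would have converted anyway
    return conv_exposed - expected_organic


# Hypothetical retargeting test.
attributed = 2_000  # conversions the platform credits to the campaign
incremental = incremental_conversions(
    conv_exposed=2_000, n_exposed=50_000,
    conv_holdout=280, n_holdout=10_000,
)
share = incremental / attributed

print(f"{incremental:.0f} of {attributed} attributed conversions "
      f"are incremental ({share:.0%})")
```

With these numbers, about 30 percent of attributed conversions are incremental and the remaining 70 percent would have occurred without the retargeting exposure.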

This does not mean retargeting has no value. It means retargeting’s value is consistently overstated by attribution models and that the optimal retargeting budget is almost always lower than attributed ROAS suggests.

Branded Search Campaigns

Branded search campaigns target users who have already searched for the brand by name, indicating strong existing purchase intent. Like retargeting, branded search frequently shows strong attributed metrics and weak incremental lift, because the users being reached were already actively seeking the brand before the ad appeared.

Incrementality testing on branded search often reveals that organic search results would have captured a high proportion of these conversions at zero additional cost. The incremental contribution of paid branded search investment is frequently lower than attributed metrics suggest, particularly for brands with strong organic search presence.

Prospecting and Upper-Funnel Campaigns

Prospecting campaigns targeting audiences with no prior brand exposure typically show weaker attributed metrics but stronger incremental lift. These campaigns are reaching audiences who would not have converted organically within the measurement window. The conversion rate is lower. The attribution model gives them less credit. The incrementality test reveals that a higher proportion of their attributed conversions represent genuine new revenue generation.

Brands optimizing purely toward attributed ROAS systematically under-invest in the campaign categories producing the highest actual incremental impact. The result is a portfolio that looks efficient on paper and is gradually depleting its high-intent audience pool without building new demand to replace it.

Incrementality Testing vs A/B Testing: Understanding the Difference

Incrementality testing and A/B testing are both controlled measurement methodologies, but they answer fundamentally different questions and should not be confused.

A/B testing compares the performance of two variants, such as two creative executions or two landing pages, against each other. It answers the question: which variant performs better? It assumes the campaign itself is producing value and optimizes the delivery of that value.

Incrementality testing compares outcomes between an exposed group and a holdout group with no exposure. It answers the question: does the advertising produce conversions that would not have occurred organically? It does not assume the campaign is producing value. It tests whether that assumption is correct.

Both methodologies are valuable and serve different purposes within a measurement framework. A/B testing optimizes campaign elements within an active campaign. Incrementality testing validates whether the campaign itself is generating genuine revenue impact. A brand that runs rigorous A/B tests but never runs incrementality tests can have a highly optimized campaign with a weak incremental contribution. The A/B testing tells them they have the best version of the campaign. The incrementality test tells them whether the campaign is worth running at all.

How to Integrate Incrementality Testing Into a Media Measurement Framework

Start With the Highest-Attributed Campaigns

The campaigns showing the strongest attributed ROAS are the most important candidates for incrementality testing, because they carry the highest risk of over-attribution. Retargeting and branded search campaigns are almost always the right place to start, because the structural conditions that produce over-attribution are most concentrated in these campaign types.

Starting with the highest-attributed campaigns also produces the most organizationally impactful results. When a retargeting campaign with a 6x attributed ROAS is revealed to have a 25 percent incremental lift, the implication for budget reallocation is immediate and material. This creates a concrete case for restructuring the measurement framework across the full portfolio.

Establish a Regular Testing Cadence

A single incrementality test produces a single data point. Integrating incrementality measurement into a regular quarterly testing cadence produces a longitudinal view of which campaigns are generating genuine impact over time, accounting for seasonal variation and evolving audience behavior.

A quarterly cadence also allows incrementality results to feed directly into quarterly budget planning cycles, which is where the findings have the most organizational leverage. When incrementality data is available at the same time budget allocation decisions are being made, the results inform decisions rather than arriving after commitments have already been made.

Use Results to Recalibrate Budget Allocation

Incrementality test results should directly inform budget allocation decisions. Campaigns with high attributed ROAS and low incremental lift are candidates for budget reduction. Campaigns with lower attributed metrics and high incremental lift are candidates for increased investment. The goal is to shift spend toward campaigns generating the highest proportion of genuinely new revenue.

This reallocation typically produces a portfolio with lower overall attributed ROAS and higher actual revenue impact. The attributed ROAS declines because budget is moving away from campaigns that were efficiently claiming credit for high-intent conversions. The actual revenue impact increases because budget is moving toward campaigns that are genuinely causing new conversions that would not have occurred organically.
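A small worked example makes the trade-off concrete. Everything here is hypothetical: the campaigns, the ROAS figures, and the incremental shares. The sketch also assumes returns scale linearly with spend, which real campaigns rarely do, so it illustrates the direction of the effect rather than its size.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    name: str
    attributed_roas: float    # attributed revenue per unit of spend
    incremental_share: float  # share of attributed revenue that a holdout test shows is incremental


def portfolio_summary(campaigns: list[Campaign],
                      spend: dict[str, float]) -> tuple[float, float]:
    """Return (portfolio attributed ROAS, total incremental revenue)."""
    total_spend = sum(spend.values())
    attributed = sum(c.attributed_roas * spend[c.name] for c in campaigns)
    incremental = sum(c.attributed_roas * c.incremental_share * spend[c.name]
                      for c in campaigns)
    return attributed / total_spend, incremental


campaigns = [
    Campaign("retargeting", attributed_roas=6.0, incremental_share=0.25),
    Campaign("prospecting", attributed_roas=2.0, incremental_share=0.90),
]

before = portfolio_summary(campaigns, {"retargeting": 10_000, "prospecting": 10_000})
after = portfolio_summary(campaigns, {"retargeting": 5_000, "prospecting": 15_000})

print(f"before: attributed ROAS {before[0]:.1f}x, incremental revenue {before[1]:,.0f}")
print(f"after:  attributed ROAS {after[0]:.1f}x, incremental revenue {after[1]:,.0f}")
```

Shifting half of the retargeting budget to prospecting lowers the portfolio's attributed ROAS from 4.0x to 3.0x while incremental revenue rises from 33,000 to 34,500 on the same total spend.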

Combine With Marketing Mix Modeling for a Complete View

Incrementality testing operates at the campaign level, measuring the lift from specific campaigns over defined time periods. Marketing mix modeling operates at the portfolio level, measuring the contribution of all marketing activity to overall business outcomes over extended periods.

Used together, the two methodologies provide a more complete and accurate picture of advertising effectiveness across the full marketing investment. Incrementality testing answers the campaign-level question: is this specific campaign causing conversions? Marketing mix modeling answers the portfolio-level question: how is the overall marketing investment contributing to business outcomes across all channels and time horizons? Neither methodology alone provides a complete answer. Together, they address the largest sources of measurement error that affect advertising budget decisions.

Incrementality Testing for Multi-Location Brands

For multi-location brands running advertising across geographic markets, incrementality testing offers an additional strategic application: geographic holdout testing.

By withholding advertising from specific geographic markets while maintaining campaigns in comparable markets, multi-location brands can measure the incremental impact of advertising at the market level. This approach is particularly valuable for brands with large numbers of physical locations, where online advertising impact on in-store conversion behavior is difficult to measure through standard digital attribution.

Geographic holdout tests can also reveal significant variation in advertising incrementality across markets. Some markets will show strong incremental lift, indicating that advertising is genuinely driving new customer acquisition in those locations. Others will show minimal lift, indicating that organic customer behavior in those markets is strong enough that advertising investment produces limited additional impact. This market-level incrementality data allows multi-location brands to concentrate advertising investment where it produces the highest genuine impact rather than distributing it evenly across all markets regardless of actual need.
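Because no two markets are identical, a geographic comparison usually needs to adjust for how each market was performing before the test. The sketch below uses a simple pre-period adjustment in the spirit of difference-in-differences. That technique is an addition for illustration, not something this article prescribes, and the market figures are hypothetical.

```python
def geo_lift(test_pre: float, test_during: float,
             holdout_pre: float, holdout_during: float) -> float:
    """Lift in the advertised market relative to the trend seen in the holdout market.

    The holdout market's growth from the pre-period to the test period is used
    to project what the advertised market would have done without advertising.
    """
    if test_pre <= 0 or holdout_pre <= 0:
        raise ValueError("pre-period conversions must be positive")
    organic_growth = holdout_during / holdout_pre
    expected_without_ads = test_pre * organic_growth
    return (test_during - expected_without_ads) / expected_without_ads


# Hypothetical in-store conversions for two comparable markets.
lift = geo_lift(test_pre=1_000, test_during=1_210,
                holdout_pre=800, holdout_during=880)
print(f"market-level incremental lift: {lift:.1%}")
```

Here the holdout market grew 10 percent on its own, so only the advertised market's growth beyond that, roughly another 10 percent, is credited to the advertising.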

Incrementality testing is a measurement methodology that determines the causal impact of advertising by comparing conversion rates between an audience exposed to a campaign and a holdout group that was not exposed. The difference in conversion rates between the two groups represents the incremental lift: the proportion of conversions caused by the advertising rather than occurring organically.

Attribution models measure which advertising touchpoints were present during a customer's conversion journey. They do not measure whether those touchpoints caused the conversion. Incrementality testing uses a controlled holdout methodology to isolate causal impact, separating conversions that were caused by advertising from conversions that would have occurred regardless of advertising exposure.

The holdout group must be large enough to accumulate a minimum of 100 conversion events during the test period for the result to be statistically reliable. In practice, holdout groups typically represent between 10 and 20 percent of the target audience. The exact size required depends on the baseline conversion rate of the audience being tested.

Retargeting campaigns and branded search campaigns benefit most from incrementality testing because these campaign types target high-intent audiences already in the conversion funnel, creating the conditions most likely to produce over-attribution. Incrementality testing on these campaign types most frequently reveals a significant gap between attributed and actual incremental performance.

A/B testing compares the relative performance of two variants against each other to identify which performs better. Incrementality testing compares outcomes between an exposed group and a holdout group to determine whether a campaign is generating conversions that would not have occurred organically. A/B testing optimizes within a campaign. Incrementality testing validates whether the campaign itself is producing genuine revenue impact.

Incrementality tests should be run on a regular quarterly cadence for campaigns representing significant budget allocations. A single test result can be influenced by external factors such as seasonality or competitive activity. Running the same test across multiple consecutive periods produces results reliable enough to inform lasting budget allocation decisions.